AI News Digest, April 9: China’s AI Hiring Agents Cut Recruitment Cycles 40% as Global Bias Lawsuits Rise

China’s HR tech market just set a new benchmark for AI hiring agents. DingTalk’s recruitment engine is posting 40% shorter hiring cycles for enterprise clients, Moka shipped an LLM-native HR product, and Beisen serves 70% of the Fortune China 500 with AI-driven talent management. Meanwhile in the West, a federal court moved a major AI screening bias lawsuit one step closer to class-action territory, with millions of applicants potentially in scope. And a leading recruitment platform pushed agentic interviewing and fraud detection into production with a direct SAP SuccessFactors integration. The message for founders and HR leaders: AI hiring agents are no longer a product demo. They are a procurement decision with legal exposure attached.

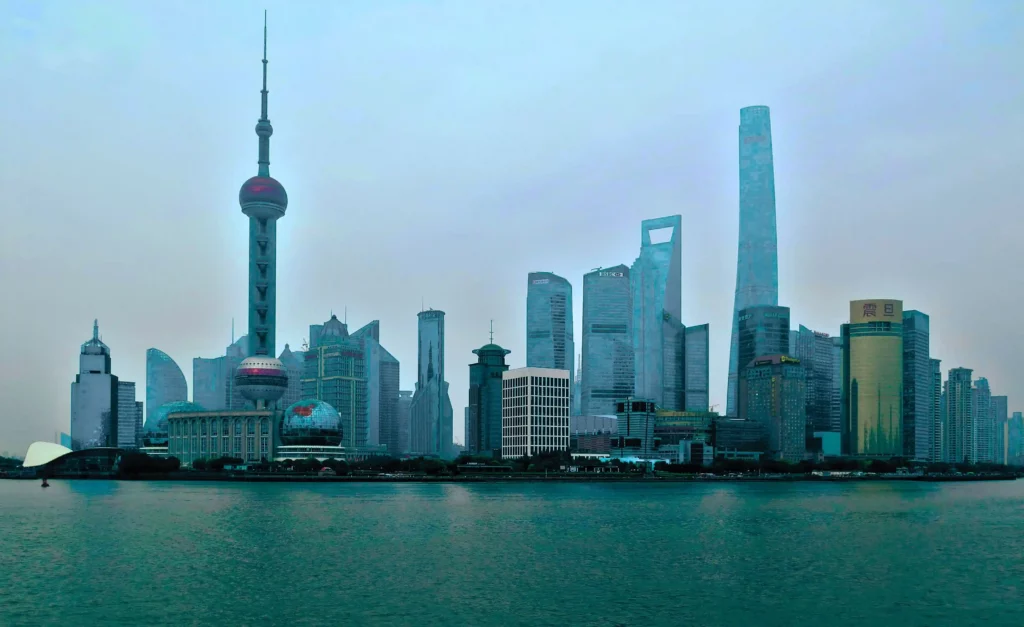

China’s AI Hiring Agents Already Rewrite the Recruitment Playbook

While Western vendors debate agentic AI roadmaps, China’s DingTalk has already shipped a full-stack AI recruitment engine that uses NLP to parse resumes in milliseconds, runs video interviews with micro-expression recognition, and triggers automated contract workflows the moment a candidate clears the hiring bar. Enterprise clients report recruitment cycles shortened by 40% and talent-match quality up 35%. (Source: CareerBoom AI / DingTalk)

DingTalk is not alone. Moka, a Beijing-based ATS, launched Moka Eva, an LLM-native HR product that handles resume screening, customised interview questions, and automated interview evaluations. Moka now appears in global ATS procurement guides alongside Western incumbents. Beisen, China’s largest integrated HR SaaS platform with over 6,000 clients including 70% of the Fortune China 500, continues expanding its AI-driven talent management suite. (Source: Tracxn)

For founders building distributed teams that include APAC hires, this is not just a regional story. Chinese AI hiring agents are setting the benchmark for speed-to-hire and automation depth. If your current ATS takes days to screen a batch of candidates, that 40% cycle reduction is the number your operations lead will eventually wave in a vendor review. Asanify’s AI applicant tracking guide covers how to benchmark your current stack against these capabilities.
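To put that 40% figure next to your own numbers, a minimal sketch like the one below can help. It computes a median time-to-hire from a few requisition records and shows what a 40% shorter cycle would look like. The record layout, the sample dates, and the function names are illustrative assumptions, not any vendor's export format.

```python
from datetime import date
from statistics import median

# Hypothetical sample data: (requisition opened, offer accepted).
HIRES = [
    (date(2026, 1, 5), date(2026, 2, 14)),
    (date(2026, 1, 12), date(2026, 2, 6)),
    (date(2026, 2, 2), date(2026, 3, 9)),
]

def median_cycle_days(hires):
    """Median number of days between opening a requisition and an accepted offer."""
    return median((accepted - opened).days for opened, accepted in hires)

def target_after_reduction(baseline_days, reduction=0.40):
    """Cycle length after cutting the baseline by `reduction` (0.40 means 40%)."""
    return baseline_days * (1 - reduction)

baseline = median_cycle_days(HIRES)
target = target_after_reduction(baseline)
print(f"Median cycle: {baseline} days; 40% shorter would be {target:.1f} days")
```

The median is used here rather than the mean so that one unusually slow requisition does not distort the baseline.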

The scale is staggering. China’s HR SaaS market now hosts over 200 funded startups building AI-native talent platforms, according to Tracxn data. These are not incremental feature updates bolted onto legacy systems. DingTalk, Moka, and Beisen built AI hiring agents from the ground up, which means their automation runs deeper and moves faster than Western incumbents retrofitting agentic layers onto decade-old architectures. If you are evaluating global hiring tools this quarter, Chinese vendors belong on the shortlist.

AI Hiring Agents Move to Production With SAP Integration

A leading recruitment platform announced on April 7 that its AI companion now handles agentic interviewing, candidate engagement, CRM-driven re-engagement, and applicant fraud detection in production. AI-recommended candidates are 100% more likely, that is, twice as likely, to be selected for interviews, according to the company. The release also deepens integration with SAP SuccessFactors, with plans for connected AI hiring agents across both platforms later this year. (Source: GlobeNewswire)

The fraud detection angle is worth watching separately. The platform demonstrated a prototype that combines behavioral signals, device intelligence, and network indicators to quarantine suspicious applications. For companies processing thousands of applications monthly, fake resumes and bot-submitted profiles are already a real cost centre. This is one of the first major ATS vendors to build detection natively rather than leaving it to third-party add-ons.
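The vendor has not published how its prototype works, so the following is only a rough sketch of the general idea: combine one signal from each family (behavioral, device, network) into a score and quarantine applications above a threshold. Every field name, threshold, and weight here is a hypothetical placeholder.

```python
from dataclasses import dataclass

@dataclass
class ApplicationSignals:
    # Hypothetical examples of the three signal families named above.
    seconds_to_complete_form: float      # behavioral signal
    device_seen_on_n_applications: int   # device intelligence
    ip_is_datacenter: bool               # network indicator

def risk_score(s: ApplicationSignals) -> int:
    """Add one point per suspicious signal; thresholds are made up for illustration."""
    score = 0
    if s.seconds_to_complete_form < 20:
        score += 1
    if s.device_seen_on_n_applications > 10:
        score += 1
    if s.ip_is_datacenter:
        score += 1
    return score

def should_quarantine(s: ApplicationSignals, threshold: int = 2) -> bool:
    """Quarantine for human review when at least `threshold` signals fire."""
    return risk_score(s) >= threshold

bot_like = ApplicationSignals(5.0, 50, True)
ordinary = ApplicationSignals(600.0, 1, False)
print(should_quarantine(bot_like), should_quarantine(ordinary))
```

Requiring two or more signals before quarantining is a deliberate choice in this sketch: a single signal, such as a fast form completion, is weak evidence on its own and would flag too many genuine applicants.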

What to do: If your ATS doesn’t offer agentic screening or fraud detection, ask your vendor what their roadmap looks like. If the answer is vague, start evaluating alternatives. Our guide to AI in HR recruitment breaks down what to look for when comparing AI hiring agents across platforms.

AI Screening Bias Lawsuit Reaches Class-Action Territory

A federal judge in the Northern District of California conditionally certified the age discrimination claims in a landmark AI screening lawsuit. The suit alleges that AI-powered candidate screening tools systematically disadvantaged applicants over 40, as well as applicants with disabilities and those from certain racial backgrounds. The potential class spans millions of job seekers who applied through the platform since September 2020. (Source: SHRM)

This is the lawsuit that HR tech vendors hoped would get dismissed early. It didn’t. The core allegation is that AI hiring agents trained on historical workforce data replicate the biases embedded in that data, penalising older, disabled, or non-white applicants at scale. For any company using AI-assisted screening, the exposure is direct, even though conditional certification is not a ruling on the merits. If your tool scores and ranks candidates using pattern matching on existing employee data, you inherit whatever biases that dataset carries.

Only 26% of applicants trust AI to evaluate them fairly, according to a Gartner survey. That gap between vendor confidence and candidate trust is where regulatory risk lives. If you are deploying AI hiring agents, document your bias testing now, before a lawsuit forces discovery. NYC’s Local Law 144 already requires annual bias audits for automated hiring tools, and more jurisdictions are watching this case to decide whether to follow suit. (Source: UNLEASH / Gartner)
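One common starting point for that documentation is the impact ratio: each group's selection rate divided by the selection rate of the most-selected group. The sketch below is a minimal illustration, not a compliant audit. The group labels and counts are invented sample data, and the 0.8 cutoff is the familiar four-fifths rule of thumb, used here only as a flag for further review.

```python
def selection_rates(outcomes):
    """outcomes maps group -> (selected, applicants); returns group -> selection rate."""
    return {group: selected / applicants
            for group, (selected, applicants) in outcomes.items()}

def impact_ratios(outcomes):
    """Each group's selection rate relative to the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    if best == 0:
        raise ValueError("No group has any selected candidates")
    return {group: rate / best for group, rate in rates.items()}

# Invented sample data for illustration only.
SAMPLE = {
    "under_40": (120, 400),     # 30% advanced to interview
    "40_and_over": (45, 300),   # 15% advanced to interview
}

ratios = impact_ratios(SAMPLE)
flagged = [group for group, ratio in ratios.items() if ratio < 0.8]
print(ratios, flagged)
```

A flagged group is a prompt to investigate, not proof of discrimination. Small sample sizes in particular can swing the ratio, so record the counts alongside the ratios when you document the test.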

Quick Hits

- SHRM’s State of AI in HR 2026 report puts AI adoption at 43% of organisations, up from 26% in 2024. Recruiting leads at 27% of use cases, followed by HR technology (21%) and L&D (17%). (Source: SHRM)

- The European Commission released the second draft of its AI content labelling Code of Practice, with finalisation targeted for mid-2026 ahead of the August 2 high-risk obligations deadline. Any HR tool generating candidate-facing content in the EU will likely need a disclosure layer. (Source: EU AI Act Newsletter)

- IndiaAI Mission is taking its BuildAI national pitch event to Chennai this month, spotlighting deep-tech AI startups for compute access, mentorship, and funding. (Source: IndiaAI)

What China’s AI Hiring Agents Mean for Your Recruitment Stack

Three takeaways from today’s digest. First, China’s DingTalk, Moka, and Beisen are producing speed and accuracy benchmarks that Western ATS vendors will need to match or explain. A 40% reduction in recruitment cycles is not a pilot metric. It is a production number from enterprise clients. Second, the legal ground under AI hiring agents is shifting fast. Conditional certification in a major AI screening lawsuit means every vendor selling AI-powered screening now operates under the shadow of disparate-impact liability. Third, agentic interviewing and fraud detection have moved from demo to production, with SAP SuccessFactors integration making these tools accessible inside mainstream HCM stacks.

If your team is evaluating hiring tools this quarter, run your own bias audit before a regulator or a plaintiff’s attorney does it for you. Asanify’s guide to AI agents for HR walks through where autonomous workflows add value and where human oversight remains non-negotiable. For teams that want the broader toolkit comparison, the top AI tools for HR roundup is a good starting point.

Frequently Asked Questions

How are Chinese companies using AI hiring agents in recruitment?

Chinese platforms like DingTalk, Moka, and Beisen have integrated AI hiring agents across the full recruitment pipeline, from NLP-based resume parsing and video-interview analysis to automated contract generation. DingTalk reports 40% shorter recruitment cycles and 35% higher talent-match quality for enterprise clients. These platforms serve thousands of large employers and are increasingly appearing in global ATS procurement comparisons.

Can AI hiring agents create legal liability for employers?

Yes. A landmark AI screening lawsuit, which reached conditional class certification in March 2026, alleges that AI screening tools can violate federal age discrimination law by replicating biases in historical hiring data. Employers using AI hiring agents should conduct regular bias audits, document their testing methodology, and check whether local laws like NYC’s Local Law 144 apply to their tools.

What should HR leaders do before deploying AI hiring agents?

Run a bias audit on your AI screening tools before deployment, not after a complaint. Document your testing methodology, review the training data for demographic imbalances, and check whether your jurisdiction requires automated hiring tool audits. Benchmark your current ATS against platforms shipping agentic workflows, including Chinese vendors like DingTalk and Moka, to understand where the market is heading.

Not to be considered as tax, legal, financial or HR advice. Regulations change over time, so please consult a lawyer, accountant or labour law expert for specific guidance.