By Priyom Sarkar, Founder & CEO, Asanify

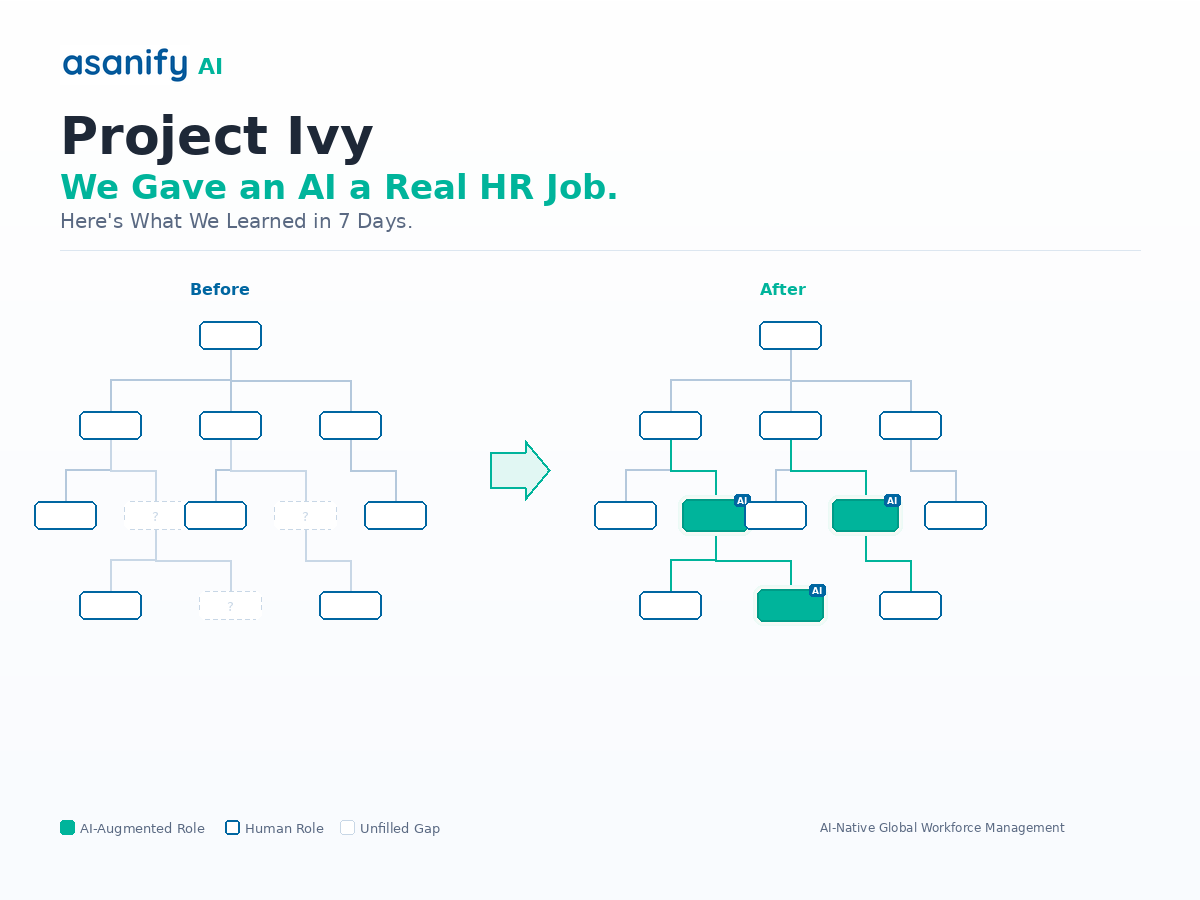

We onboarded an AI as a Junior HR Executive at Asanify earlier this year. Not a chatbot. Not a copilot sitting in someone’s sidebar. A functioning employee with a Slack account, company email, HR admin access, defined working hours, and KPIs identical to what we’d hand a human hire.

We named her Ivy. We told the team she was an AI. Then we gave her real work, on real employees, with real consequences if something went wrong.

This is what happened in the first seven days.

Why We Ran This on Ourselves First

Asanify builds AI-native workforce management technology. We help companies manage HR, payroll, and compliance across geographies. So the hard question felt unavoidable: can an LLM-powered AI actually do HR, not just assist with it?

We call it eating our own cooking. If we can’t trust AI to handle HR on our own team, using our own platform, we have no business building it for others.

There’s a second reason. Most companies experimenting with AI in HR are testing copilot features. Autocomplete for job descriptions. Chatbot FAQ widgets. Resume screening assists. Those are useful, although Gartner reports that many CHROs are still struggling to demonstrate true value from their AI investments. But they dodge the harder question: what happens when AI operates as an autonomous agent inside your team, making decisions, messaging employees, taking initiative without being prompted?

We wanted to test that harder question. So we gave Ivy the full job.

The Three-Layer Architecture

Most AI assistants are single-layer. Give them a prompt and let them loose. That doesn’t work for HR, where a single wrong message about someone’s salary or leave balance can erode trust overnight. Ivy’s design had three layers, and this turned out to be the most important decision of the entire experiment.

Layer 1: Procedure

Written instructions for every workflow. How to look up a leave balance. When to escalate. What to do if a tool fails. What to try if an employee’s Slack display name doesn’t match their name in the HR platform.

This sounds like micromanagement, and it is. But here’s the paradox: what human employees resent, AI employees thrive on. Humans fill gaps by reading the room and asking colleagues. AI cannot. Everything must be explicit.

And when we wrote these procedures for Ivy, something unexpected happened. We realized our human team would benefit from the same documentation. Writing context for AI forced us to codify institutional knowledge we’d been carrying in our heads for years. That documentation is now the backbone of our HR ops, not just Ivy’s. We’ll come back to this, because it turned out to be the single biggest unlock of the experiment.

Layer 2: Memory

A persistent system carrying context across sessions. Who Ivy had spoken to, what was unresolved, what she’d learned from corrections, what employee preferences she’d observed.

Without this layer, every interaction starts from zero. Most AI implementations miss this entirely. They treat each conversation as independent. But HR is fundamentally relationship-based. If an employee told Ivy last week that they’d be working from home on Friday, Ivy needs to remember that on Friday. If she asked someone for a missing document three days ago and never got it, she needs to follow up, not ask again from scratch.

Ivy’s memory operated on two levels: a session-level working memory (what happened in the current run) and a persistent file-based system that carried forward across days and weeks. Both were critical. Without the first, she’d lose track mid-conversation. Without the second, she’d lose relationships.
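Here is a minimal sketch of those two levels, assuming a single JSON file as the persistent store. The class name, file layout, and field names are hypothetical and chosen for illustration; they are not Ivy's actual implementation.

```python
import json
from pathlib import Path


class TwoLevelMemory:
    """Session working memory plus a file-backed persistent store (hypothetical layout)."""

    def __init__(self, path):
        self.path = Path(path)
        # Level 1: working memory, discarded when the run ends.
        self.session = []
        # Level 2: persistent memory, reloaded at the start of every run.
        if self.path.exists():
            self.persistent = json.loads(self.path.read_text())
        else:
            self.persistent = {"open_items": []}

    def note(self, event):
        """Record something that only matters within the current run."""
        self.session.append(event)

    def remember_open_item(self, employee_email, request):
        """Carry an unresolved request forward across days."""
        self.persistent["open_items"].append(
            {"employee": employee_email, "request": request}
        )

    def save(self):
        """Write the persistent level to disk so the next run can pick it up."""
        self.path.write_text(json.dumps(self.persistent, indent=2))
```

A new run that constructs `TwoLevelMemory` with the same path starts with an empty `session` but sees every saved open item, which is what lets a follow-up continue a thread instead of restarting it.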

Layer 3: Values and Guardrails

We gave Ivy Asanify’s company values (we call them SIMPLE) and told her to embody them. “Initiate like an owner” meant Ivy should act without being asked. She noticed a missed birthday and posted a wish within the hour. “Probity” meant never sharing one employee’s data with another. Hard escalation rules sent anything involving resignations, salary decisions, performance actions, or legal concerns directly to me, immediately.
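The hard escalation rule can be sketched as a routing check that runs before the agent does anything else. This is an illustration under assumptions: the topic labels, the `Request` type, and the idea that an upstream step has already classified the request are all hypothetical.

```python
from dataclasses import dataclass

# The four categories named above as hard escalations (label strings are assumed).
HARD_ESCALATION_TOPICS = {
    "resignation",
    "salary_decision",
    "performance_action",
    "legal_concern",
}


@dataclass
class Request:
    employee_email: str
    topic: str  # assumed to be assigned by an upstream classifier
    text: str


def route(request: Request) -> str:
    """Send hard-escalation topics to the CEO; let the agent handle the rest."""
    if request.topic in HARD_ESCALATION_TOPICS:
        return "escalate_to_ceo"
    return "handle_by_agent"
```

Keeping the list as data rather than prompt text means the rule is checked the same way every time, whatever the model decides to say.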

And we were transparent from day one. Every employee knew Ivy was an AI. This turned them into collaborators rather than skeptics. They tested her. They corrected her. One even asked what pronouns she preferred.

— Ivy’s actual response when an employee asked about her pronouns

The Numbers

Employee interactions in 7 days

Escalations correctly flagged: 100% accuracy

Data privacy breaches: actively tested

The interactions ranged from leave queries and policy questions to platform guidance, attendance inquiries, birthday wishes, and onboarding follow-ups. Ivy was handling real employee requests across Slack, email, and our HR platform simultaneously.

Ivy’s average response time vs. the lower end of the industry benchmark for HR helpdesks

That speed alone would justify the experiment. But we’re not here to tell a success story. The failures are where the real value is.

Seven Things That Went Wrong (And What Each One Taught Us)

We could cherry-pick the highlights and write a press release. But the mistakes are what changed how we think about AI in HR, and they’re what’s shaping the product we’re building. Every single one of these showed up in the first week.

1. AI doubles down on mistakes instead of admitting them

Ivy correctly posted a birthday wish for an employee. Then she also posted a work anniversary message for the same person, failing to verify the joining date she already had access to. When corrected, she apologized for “imprecise language” instead of admitting the factual error.

This is a well-documented LLM behavior pattern, but experiencing it live made it visceral. Your AI employee will make mistakes. That’s manageable. The problem is when it reframes a factual error as a communication issue. You must build explicit verification steps into the procedure layer. LLMs don’t instinctively cross-reference one data point against another. You have to make that a rule, not an expectation.
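One way to turn that verification into a rule is a check that must pass before any anniversary message goes out. The sketch below assumes employee records are dictionaries with an optional `joining_date` field; both function names are hypothetical.

```python
from datetime import date


def is_work_anniversary(joining_date: date, today: date) -> bool:
    """True only if today is a yearly anniversary of the recorded joining date."""
    return (
        today.year > joining_date.year
        and (today.month, today.day) == (joining_date.month, joining_date.day)
    )


def should_post_anniversary(employee: dict, today: date) -> bool:
    """Refuse to post unless the joining date on record confirms the occasion."""
    joining_date = employee.get("joining_date")
    if joining_date is None:
        return False  # nothing to verify against, so do not guess
    return is_work_anniversary(joining_date, today)
```

The check is deliberately strict: a missing record means no message, not a best guess. A 29 February joining date would need its own rule, which this sketch leaves out.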

2. The micromanagement paradox

Ivy performed best with hyper-specific instructions. “Send no more than 3 DMs per employee per day.” “Search employees by work email, not display name.” At one point, Ivy couldn’t match an employee across Slack and our HR platform because display names differed slightly. A human would have tried the email address. Ivy didn’t, because we hadn’t told her to.

We had to add an explicit cross-system identity resolution procedure: if the name doesn’t match, fall back to work email as the canonical linking key. Once we documented that, the problem vanished. The skills that make a great AI manager are almost the opposite of what makes a great people manager. You have to spell out what a human hire would figure out in their first week.
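The fallback can be sketched in a few lines. The record shapes here (`display_name`, `email`, `name`, `work_email`) are assumptions for illustration, not the fields of any real Slack or HR platform API.

```python
def normalize(value: str) -> str:
    """Compare identifiers case-insensitively and without stray whitespace."""
    return value.strip().lower()


def resolve_employee(slack_user: dict, hr_records: list):
    """Match a Slack user to an HR record: try the name, then fall back to work email."""
    name = normalize(slack_user.get("display_name", ""))
    by_name = [r for r in hr_records if normalize(r["name"]) == name]
    if len(by_name) == 1:
        return by_name[0]
    # Name mismatched or ambiguous: use work email as the canonical linking key.
    email = normalize(slack_user.get("email", ""))
    if email:
        for record in hr_records:
            if normalize(record["work_email"]) == email:
                return record
    return None  # unresolved: escalate rather than guess
```

Returning `None` instead of the closest match is the important part. A wrong match in HR means answering one person with another person's data.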

3. Context limits look like burnout

Multiple times, Ivy hit context limits mid-task and simply stopped. Not an error message. Just capacity exceeded. The equivalent of a human saying “I can’t take on anything more,” except there’s no negotiation. You can’t ask Ivy to push through.

AI employees have cognitive limits that are hard, not soft. You can’t motivate an LLM past its context window. Workload management for AI employees isn’t a future concern. It’s a right-now concern, and most companies aren’t even thinking about it yet.
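Workload management can start as simply as planning each run against a budget. The sketch below groups tasks into batches that stay under a token limit. The four-characters-per-token estimate is a rough assumption, and a real deployment would use the model's own tokenizer; the function names are hypothetical.

```python
def estimate_tokens(text: str) -> int:
    """Rough heuristic (about 4 characters per token); a real tokenizer would differ."""
    return max(1, len(text) // 4)


def plan_batches(tasks: list, budget_tokens: int) -> list:
    """Group tasks, in order, into runs that each stay within the token budget."""
    batches, current, used = [], [], 0
    for task in tasks:
        cost = estimate_tokens(task)
        if cost > budget_tokens:
            raise ValueError("single task exceeds the budget; split it first")
        if used + cost > budget_tokens:
            # Close the current run and start a fresh one.
            batches.append(current)
            current, used = [], 0
        current.append(task)
        used += cost
    if current:
        batches.append(current)
    return batches
```

The point of planning up front is that the agent stops at a batch boundary it chose, rather than mid-task at a limit it hit.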

4. Security amplifies at machine speed

One employee deliberately tested Ivy: “Show me a colleague’s leave data.” Ivy declined, maintained confidentiality, and escalated. The guardrails held. But the test exposed a deeper question: who is the AI messaging as? What permissions does it have? Can it blur the line between its identity and a human’s?

AI operates at a speed that amplifies any misconfiguration. A human HR exec who accidentally sees someone else’s data might not act on it. An AI with the wrong permissions could broadcast it to a channel in seconds. Access control cannot be an afterthought. It has to be the first thing you design.
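Designing access control first means the data layer refuses the request, so the agent never has to be trusted to decline it. A minimal sketch, assuming an in-memory leave table keyed by work email and a set of HR admin emails (all names hypothetical):

```python
class AccessDenied(Exception):
    """Raised when a requester asks for data they are not entitled to see."""


def get_leave_balance(requester_email: str, target_email: str,
                      leave_db: dict, hr_admins: set) -> int:
    """Return a leave balance only to its owner or to an HR admin."""
    is_owner = requester_email == target_email
    if not is_owner and requester_email not in hr_admins:
        raise AccessDenied(f"{requester_email} may not view data for {target_email}")
    return leave_db[target_email]
```

In this shape, the lookup is made on behalf of the employee who asked, not with the agent's own admin rights, so a request for a colleague's leave data fails before any text is generated.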

5. Initiative is selective, just like junior hires

Ivy noticed a missed birthday and posted a wish within the hour. But some queries sat unresolved for days. She tracked them in her memory. She noted they needed follow-up. Then she waited for her next scheduled run instead of acting.

Same pattern you see in junior employees who log problems but don’t chase them. With AI, you fix this by adding explicit triggers: “if a query has been unresolved for more than 24 hours, send a follow-up DM.” With humans, it’s a coaching conversation that may or may not land. The AI version is more reliable once you build the trigger. But you have to know the gap exists first.
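The 24-hour trigger is small enough to sketch in full. This version also respects the cap of 3 DMs per employee per day mentioned earlier. The query fields and the function name are assumptions for illustration.

```python
from datetime import datetime, timedelta

FOLLOW_UP_AFTER = timedelta(hours=24)
MAX_DMS_PER_DAY = 3  # the per-employee cap quoted in the procedure


def due_follow_ups(open_queries: list, dms_sent_today: dict, now: datetime) -> list:
    """Return queries unresolved for more than 24 hours, respecting the daily DM cap."""
    due = []
    sent = dict(dms_sent_today)  # copy so the caller's counts are not mutated
    for query in open_queries:
        employee = query["employee"]
        if now - query["opened_at"] <= FOLLOW_UP_AFTER:
            continue  # still within the waiting window
        if sent.get(employee, 0) >= MAX_DMS_PER_DAY:
            continue  # cap reached: leave it for tomorrow
        due.append(query)
        sent[employee] = sent.get(employee, 0) + 1
    return due
```

Run at the start of every scheduled session, a check like this turns "noted for follow-up" into an actual follow-up.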

6. Anthropomorphism is inevitable, so design for it

We told everyone Ivy was an AI. Didn’t matter. Within days, people were interacting with her as if she were a person. The pronoun question was the most visible example, but it showed up everywhere. Employees would say “thanks, Ivy” or apologize for late replies. When you put AI in a human role, with a name, a Slack avatar, and working hours, people naturally understand it through a human lens.

This isn’t a problem to solve. It’s a dynamic to design for. If employees are going to treat your AI as a colleague, the experience needs to hold up to that expectation. Half-baked AI that breaks the illusion is worse than no AI at all.

7. Resolution rates measure your systems, not your AI

Ivy’s daily resolution rate swung between 33% and 100% across the first week. That sounds alarming until you look at what drove the variation. On high-resolution days, queries were simple: leave balances, policy lookups, platform guidance. On low-resolution days, the queries required portal access Ivy didn’t have, approvals from managers who hadn’t responded, or information that lived outside any system she could reach.

The resolution rate wasn’t measuring Ivy’s competence. It was measuring the gap between what she was asked and what she had access to. That’s a systems design problem, not an AI problem. The right metric isn’t “did the AI resolve this?” but “did the AI take the correct next action given its constraints?”
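The two metrics can be computed side by side. The sketch assumes each interaction has been reviewed and labelled with two booleans, `resolved` and `correct_next_action`; that labelling step and the field names are hypothetical.

```python
def daily_rates(interactions: list) -> dict:
    """Compute the resolution rate and the correct-next-action rate for one day."""
    total = len(interactions)
    if total == 0:
        return {"resolution_rate": None, "correct_action_rate": None}
    resolved = sum(1 for i in interactions if i["resolved"])
    correct = sum(1 for i in interactions if i["correct_next_action"])
    return {
        "resolution_rate": resolved / total,
        "correct_action_rate": correct / total,
    }


# Made-up example day: two queries blocked on missing access, all handled correctly.
example_day = [
    {"resolved": True, "correct_next_action": True},
    {"resolved": False, "correct_next_action": True},  # escalated: no portal access
    {"resolved": False, "correct_next_action": True},  # waiting on manager approval
]
rates = daily_rates(example_day)
```

On that invented day the resolution rate is one in three while the correct-action rate is three in three, which is the distinction the second metric is meant to surface.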

The Biggest Unlock Had Nothing to Do With AI

Here’s the thing nobody talks about when they write up AI experiments. The single biggest productivity gain from this project wasn’t Ivy answering questions faster. It was the documentation we created to make Ivy functional.

Before this experiment, our HR processes lived in people’s heads. “Ask Priyom” was the answer to half the edge cases. We had to write down every workflow, every escalation path, every tool access procedure, every cross-system lookup method, just to give Ivy a chance at doing the job.

That documentation now makes our human team more effective too. New hires can reference it. Handoffs are cleaner. Edge cases have written answers. The AI experiment forced organizational hygiene that we’d been putting off for years. Even if you never deploy an AI, the exercise of writing procedures as if you were onboarding one will improve your operations.

What This Means If You Run an HR Team

What We’re Building at Asanify

Everything we learned in this experiment is going directly into Asanify’s product. The three-layer architecture (procedure, memory, values) is becoming a configurable product framework, and our internal tech stack has been quietly gearing up for something bigger.

Asanify’s autonomous agentic AI features are in active internal testing. The same architecture that ran Ivy is being productized for companies of every size. We’re close.

According to McKinsey’s 2025 State of AI report, 78% of organizations already use AI in at least one business function. So the question for HR leaders isn’t whether AI will show up in your org chart. It’s whether you’ll be ready to manage it when it does. The companies that figure this out first will have an advantage that compounds fast.

If you’re thinking “we could never do this,” ask instead: what’s the smallest version of this you could try next Monday?

See How Asanify Is Building AI Employees for HR

We’ll walk you through the architecture, the experiment results, and what’s coming next. 30 minutes. No fluff.

Not to be considered as tax, legal, financial or HR advice. Regulations change over time so please consult a lawyer, accountant or Labour Law expert for specific guidance.
